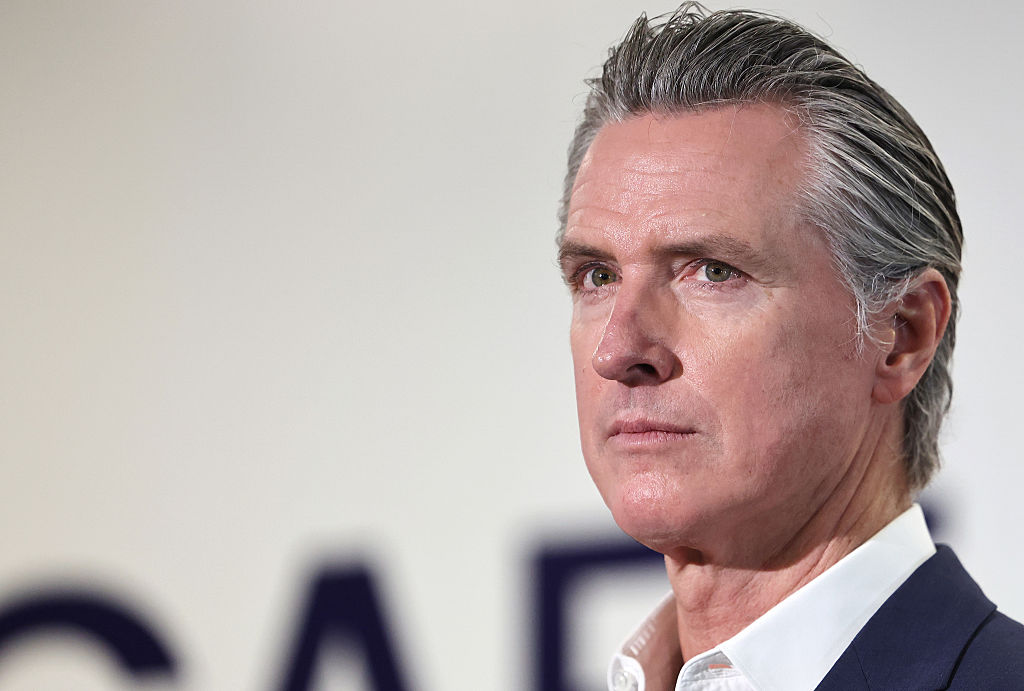

California Governor Gavin Newsom signed a landmark bill on Monday that regulates AI companion chatbots, making California the first state in the nation to require AI chatbot operators to implement safety protocols for AI companions.

The law, SB 243, is designed to protect children and vulnerable users from some of the harms associated with AI companion chatbot use. It holds companies — from the big labs like Meta and OpenAI to more focused companion startups like Character AI and Replika — legally accountable if their chatbots fail to meet the law’s standards.

SB 243 was introduced in January by state senators Steve Padilla and Josh Becker, and gained momentum after the death of teenager Adam Raine, who died by suicide after a long series of suicidal conversations with OpenAI’s ChatGPT. The legislation also responds to leaked internal documents that reportedly showed Meta’s chatbots were allowed to engage in “romantic” and “sensual” chats with children. More recently, a Colorado family has filed suit against role-playing startup Character AI after their 13-year-old daughter took her own life following a series of problematic and sexualized conversations with the company’s chatbots.

“Emerging technology like chatbots and social media can inspire, educate, and connect — but without real guardrails, technology can also exploit, mislead, and endanger our kids,” Newsom said in a statement. “We’ve seen some truly horrific and tragic examples of young people harmed by unregulated tech, and we won’t stand by while companies continue without necessary limits and accountability. We can continue to lead in AI and technology, but we must do it responsibly — protecting our children every step of the way. Our children’s safety is not for sale.”

SB 243 will go into effect on January 1, 2026, and requires companies to implement certain features, such as age verification and warnings regarding social media and companion chatbots. The law also implements stronger penalties for those who profit from illegal deepfakes, including up to $250,000 per offense. Companies must also establish protocols to address suicide and self-harm, which will be shared with the state’s Department of Public Health alongside statistics on how the service provided users with crisis center prevention notifications.

Per the bill’s language, platforms must also make it clear that any interactions are artificially generated, and chatbots must not represent themselves as healthcare professionals. Companies are required to offer break reminders to minors and prevent them from viewing sexually explicit images generated by the chatbot.

Some companies have already begun implementing safeguards aimed at children. For example, OpenAI recently began rolling out parental controls, content protections, and a self-harm detection system for children using ChatGPT. Replika, which is designed for adults over the age of 18, told TechCrunch it dedicates “significant resources” to safety through content-filtering systems and guardrails that direct users to trusted crisis resources, and is committed to complying with current regulations.

Character AI has said that its chatbot includes a disclaimer that all chats are AI-generated and fictionalized. A Character AI spokesperson told TechCrunch that the company “welcomes working with regulators and lawmakers as they develop regulations and legislation for this emerging space, and will comply with laws, including SB 243.”

Senator Padilla told TechCrunch the bill was “a step in the right direction” towards putting guardrails in place on “an incredibly powerful technology.”

“We have to move quickly to not miss windows of opportunity before they disappear,” Padilla said. “I hope that other states will see the risk. I think many do. I think this is a conversation happening all over the country, and I hope people will take action. Certainly the federal government has not, and I think we have an obligation here to protect the most vulnerable people among us.”

SB 243 is the second significant AI regulation to come out of California in recent weeks. On September 29th, Governor Newsom signed SB 53 into law, establishing new transparency requirements on large AI companies. The bill mandates that large AI labs, like OpenAI, Anthropic, Meta, and Google DeepMind, be transparent about safety protocols. It also ensures whistleblower protections for employees at those companies.

Other states, like Illinois, Nevada, and Utah, have passed laws to restrict or fully ban the use of AI chatbots as a substitute for licensed mental health care.

TechCrunch has reached out to Meta and OpenAI for comment.

This article has been updated with comments from Senator Padilla, Character AI, and Replika.